Here’s the thing. Monero isn’t just another coin with a privacy checkbox. It feels different, because its privacy is baked in at a protocol level, not bolted on as an option. Initially I thought privacy coins were all smoke and mirrors, but then I dug into ring signatures and stealth addresses and my skepticism softened. On one hand privacy is a technical feature; on the other, it’s a social requirement for anyone who values financial autonomy, though that line is fuzzy sometimes.

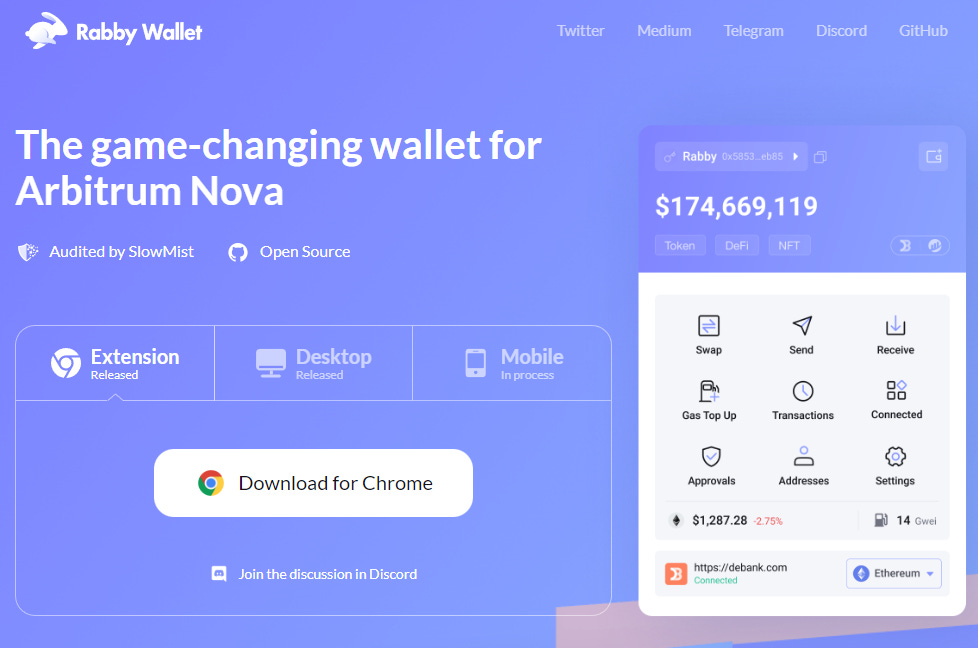

Whoa, seriously? My first run with the Monero GUI wallet surprised me. The GUI is simple enough for someone who isn’t a node jockey, yet it exposes the controls you need when you care about privacy. I’ll be honest—some parts of the UX bug me, but they don’t compromise core privacy, and that’s what counts. My instinct said to run a local node, but then I realized syncing can be painless if you plan a little.

Hmm… somethin’ felt off the first time I used a remote node. I got faster sync, but my threat model changed, because a remote node sees my IP address and can see which outputs my wallet requests. For casual use, remote nodes are a practical trade-off, but if you want the strongest privacy, run your own node. A local node also validates the chain for you and contributes to the Monero network, which matters.
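If you do run a local node, it’s handy to confirm it has caught up before pointing the wallet at it. Here is a minimal sketch, assuming `monerod` is listening on its default mainnet RPC port (18081) on localhost and that the `get_info` result carries `height` and `target_height`. The helper names are mine, not part of any Monero tooling.

```python
import json
import urllib.request

# Assumption: default mainnet RPC endpoint of a local monerod.
NODE_URL = "http://127.0.0.1:18081/json_rpc"


def build_get_info_request():
    """JSON-RPC 2.0 payload for the daemon's get_info method."""
    return {"jsonrpc": "2.0", "id": "0", "method": "get_info"}


def fetch_node_info(url=NODE_URL, timeout=10):
    """POST the get_info request and return the 'result' object."""
    data = json.dumps(build_get_info_request()).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["result"]


def looks_synced(info):
    """Heuristic: the node has blocks and is not behind its sync target.

    target_height can be 0 once the node is caught up, so height >= target
    covers both cases. Treat this as a rough check, not a guarantee.
    """
    height = info.get("height", 0)
    target = info.get("target_height", 0)
    return height > 0 and height >= target


if __name__ == "__main__":
    info = fetch_node_info()
    print("height:", info.get("height"), "synced:", looks_synced(info))
```

The network call only runs when the script is executed directly, so the helpers can be imported and tested without a node.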

Okay, so check this out—rings, stealth addresses, and bulletproofs all work together. Ring signatures hide which input in a transaction is actually being spent by blending it with several decoys, so observers can’t trivially point to your spend. Stealth addresses create one-time destination addresses for each incoming payment, meaning public ledgers don’t show a static address you reuse forever. Bulletproofs compress range proofs to keep transactions smaller and cheaper, while maintaining confidentiality for amounts, which is neat and practical.
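To make the stealth-address idea concrete, here is a toy sketch of the one-time-key derivation. Big caveat: Monero does this on the ed25519 curve with its own hash-to-scalar function; the version below swaps in plain modular exponentiation and SHA-256 so it runs with only the standard library. It shows the shape of the math (a shared secret between the sender’s per-transaction key and the recipient’s view key, added to the recipient’s spend key) and is not secure or wire-compatible. All function names are made up for illustration.

```python
import hashlib
import secrets

# Toy group: integers modulo a known prime, generator 2.
# Assumption for illustration only; Monero uses ed25519 points instead.
P_MOD = 2**255 - 19
G = 2


def hash_to_scalar(value: int) -> int:
    """Hash a group element to an exponent (stand-in for Monero's H_s)."""
    digest = hashlib.sha256(value.to_bytes(32, "big")).digest()
    return int.from_bytes(digest, "big") % (P_MOD - 1)


def keypair():
    """Return (private exponent, public element)."""
    priv = secrets.randbelow(P_MOD - 2) + 1
    return priv, pow(G, priv, P_MOD)


def sender_derive(view_pub: int, spend_pub: int):
    """Sender side: fresh tx key r, one-time key P = G^H(A^r) * B."""
    r, tx_pub = keypair()
    shared = pow(view_pub, r, P_MOD)
    one_time_pub = (pow(G, hash_to_scalar(shared), P_MOD) * spend_pub) % P_MOD
    return tx_pub, one_time_pub


def recipient_owns(tx_pub: int, one_time_pub: int, view_priv: int, spend_pub: int) -> bool:
    """Recipient side: recompute P from R^a and compare (needs only the view key)."""
    shared = pow(tx_pub, view_priv, P_MOD)
    expected = (pow(G, hash_to_scalar(shared), P_MOD) * spend_pub) % P_MOD
    return expected == one_time_pub


def one_time_private(tx_pub: int, view_priv: int, spend_priv: int) -> int:
    """Spending needs the spend key too: x = H(R^a) + b."""
    shared = pow(tx_pub, view_priv, P_MOD)
    return (hash_to_scalar(shared) + spend_priv) % (P_MOD - 1)
```

Two payments to the same recipient produce two unrelated-looking one-time keys, which is why the ledger never shows a reusable address. The view key is enough to spot incoming outputs, while spending also requires the spend key.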

Really? You can inspect more than you think. Ring size is not one of the knobs, though: it is fixed by the protocol (16 members at the time of writing), so every transaction looks alike. What the GUI does let you do is manage key images, inspect incoming and outgoing transactions, and set up a view-only wallet without handing your private spend key to strangers. Initially I thought sharing a view-only wallet was harmless, but then I saw how view-only access reveals incoming payments and can leak patterns if you mishandle backups. So back up everything, multiple times, and store mnemonics securely in different locations.

How I handle anonymous transactions and the Monero GUI wallet (and where to get a clean build)

Here’s the thing. If you want a straightforward place to start, grab a copy from a download source you trust, so you don’t end up with tampered binaries. I always recommend checking signatures and hashes before launching any wallet, and that includes whatever installer you fetch; a link like monero wallet download is a convenient starting place, not a substitute for verification. On a practical level, the GUI walks you through creating a wallet, restoring from seed, and connecting to a local or remote node, which is great for folks migrating from custodial services. Remember: a remote node costs you some privacy, and running your own node is better if your goal is maximum anonymity and trust-minimization.
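For the hash half of that check, here is a minimal sketch in Python. It assumes you already have the published SHA-256 value from a hash list whose PGP signature you verified separately; the script only confirms that the file on disk matches that value. The function names and the example file path are placeholders.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=1 << 20):
    """Stream the file in chunks so large installers don't need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, published_hex):
    """Compare the local file's SHA-256 with the published hex string."""
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)


if __name__ == "__main__":
    import sys

    # Usage (hypothetical file name): python verify.py installer.bin <sha256-hex>
    file_path, published = sys.argv[1], sys.argv[2]
    print("OK" if matches_published_hash(file_path, published) else "MISMATCH")
```

A matching hash only tells you the file is the one the hash list describes. Whether the hash list itself is genuine is what the signature check is for, so don’t skip that step.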

Whoa! Little habits matter a lot. Use separate wallets for different purposes (savings, everyday spending, and any experimental stuff) so linking is harder for outside observers. Initially I lumped everything together, and later I regretted it, because mixing contexts makes deanonymization easier if someone analyzes spending patterns. Juggling many wallets is inconvenient, but the trade-off is worth it for serious privacy. I keep an encrypted USB with cold-wallet seeds for long-term holdings, and a hot wallet for small, day-to-day amounts.

Here’s the thing. Network-level protections like Tor or I2P make it harder to tie your transactions to your IP address. The GUI can route its traffic through a SOCKS5 proxy such as Tor, and when combined with a local node you get layered privacy benefits, though latency can increase and setups may get fiddly. I’m biased toward Tor because it’s widely supported and relatively easy to use on desktop systems. That said, I occasionally run into subtle leaks if I’m careless with DNS or if apps on my machine behave oddly, so segregating environments helps. Use a dedicated machine or VM if you can.

Seriously? Address reuse is still the enemy. Stealth addresses keep your address off the public ledger, but anyone you hand the same address to can compare notes and tie those payments to one person. Create a fresh subaddress for each counterparty or transaction, and keep an eye on your outgoing transaction patterns so nothing links back in obvious ways. Initially I underestimated the importance of unique addresses when I was testing, but after a couple of mistakes my approach hardened. Pro tip: the GUI’s integrated address book is handy for labeling while keeping unique subaddresses for payments.

Hmm… trust and verification matter more than most users realize. Verify the wallet binaries, check PGP signatures when available, and prefer releases signed by core contributors. Something else: community channels and release notes often flag security issues early, so skim them. I’m not 100% sure you can eliminate every vector, but taking these steps reduces risk significantly, and that’s the goal.

FAQ: Quick answers for common concerns

Is Monero truly anonymous?

Here’s the thing. Monero provides strong privacy primitives by default: ring signatures, stealth addresses, and confidential transactions combine to hide senders, recipients, and amounts. However, absolute anonymity depends on your whole operational security stack, including how you obtain funds, which nodes you use, and how you handle metadata. Some folks assume protocol-level privacy equals total anonymity, but real privacy is a system property, not just a feature.

Should I run a local node or use a remote node?

Whoa! If you want maximal privacy and don’t mind the disk and bandwidth, run a local node. It gives you trust-minimized validation and helps the network. If convenience trumps everything, remote nodes are okay for small amounts, though they introduce a new trust vector. Personally I run a local node at home and use a remote node only in emergencies.

What about backups and seeds?

Really? Backups are everything. Write your mnemonic seed on paper (or metal if you fear fire), store copies in separate secure locations, and consider a passphrase for extra protection. If you lose the seed, you lose access—period. Also check recovery by restoring to a test device before relying solely on backups.
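A full test restore is the real proof, but a quick checksum pass can catch transcription typos first. This is a sketch under assumptions: it targets the standard 25-word seed with the English word list, where, as I understand the scheme, the 25th word repeats one of the first 24, chosen by a CRC-32 over their three-letter prefixes. It does not check that each word is actually in the word list, and you should only ever run something like this on an offline machine. The function names are mine.

```python
import zlib

# Assumption: English word list, where the first 3 letters identify a word.
PREFIX_LEN = 3


def checksum_word(words):
    """Pick the checksum word for the first 24 words of a seed."""
    trimmed = "".join(word[:PREFIX_LEN] for word in words)
    index = zlib.crc32(trimmed.encode("utf-8")) % len(words)
    return words[index]


def seed_checksum_ok(mnemonic):
    """True if a 25-word mnemonic's last word matches the expected checksum word."""
    words = mnemonic.lower().split()
    if len(words) != 25:
        return False
    expected = checksum_word(words[:24])
    # Compare prefixes only, since the prefix is what identifies a word.
    return expected[:PREFIX_LEN] == words[24][:PREFIX_LEN]
```

A passing checksum means the words are self-consistent, not that the backup is complete, so still restore on a test device before trusting it.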